How do we think? That’s one of the most fundamental questions in philosophy, and it has been debated since before Plato’s time. In the philosophy of mind, however, philosophers are more interested in how we can come to know our own minds, through introspection and by observing other people’s actions and speech. Connectionism is one attempt to explain how this might be possible. Although we don’t all have the same model of the mind, our minds work similarly enough that we can make generalizations about how we think.

Defining connectionism

The term connectionism refers to a set of ideas and theories in psychology that has grown steadily in recent decades. The central idea behind connectionist thought is that mental processes are, in some way, analogous to the way information flows through a network of simple, interconnected processing units.

In other words, mental activity works more like information being routed through a computer network than like a car following directions on a map. Our brains don’t store distinct bits of information in fixed locations; instead, they operate through patterns of association that can be strengthened or weakened by experience. When we learn something new or remember something familiar, for example, our brains adjust those associations so that the same thing is easier to recall later on. There’s no little filing cabinet full of memories stuffed inside your head!

History of Connectionism

The first step toward connectionism came in 1943, when Warren McCulloch and Walter Pitts proposed a mathematical model of the neuron as a simple threshold unit that combines weighted inputs. Later, in 1958, Frank Rosenblatt introduced the perceptron, an artificial neural network (ANN) model that could learn to classify simple patterns; it became one of the most important models in connectionist research and development.
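
To make the perceptron idea concrete, here is a minimal sketch of the classic perceptron learning rule: a single threshold unit whose weights are nudged only when it misclassifies a training example. The training data (the logical AND function), the learning rate, the epoch count, and the names `train_perceptron` and `predict` are illustrative assumptions, not details of Rosenblatt’s original machine.

```python
from typing import List, Tuple

Sample = Tuple[List[int], int]  # (input pattern, target label 0 or 1)


def predict(weights: List[float], bias: float, x: List[int]) -> int:
    """Threshold unit: output 1 if the weighted sum plus bias is positive, else 0."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0


def train_perceptron(data: List[Sample], lr: float = 1.0,
                     epochs: int = 20) -> Tuple[List[float], float]:
    """Perceptron learning rule: change the weights only after a wrong prediction."""
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in data:
            error = target - predict(weights, bias, x)  # -1, 0 or +1
            # Shift the decision boundary toward the misclassified example.
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Logical AND is linearly separable, so the rule is guaranteed to converge on it.
AND_DATA: List[Sample] = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

w, b = train_perceptron(AND_DATA)
print([predict(w, b, x) for x, _ in AND_DATA])  # [0, 0, 0, 1]
```

After a handful of passes over the data the unit stops making mistakes, at which point further epochs leave the weights unchanged.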

In recent years, interest in ANNs has grown substantially as they have been applied successfully to fields as diverse as economics, biology, mathematics, and engineering. Today’s ANN applications include computer vision, speech recognition, and game playing, and many artificial intelligence (AI) experts expect ANNs to play an increasingly significant role in future AI developments.

Parts of the connectionist network

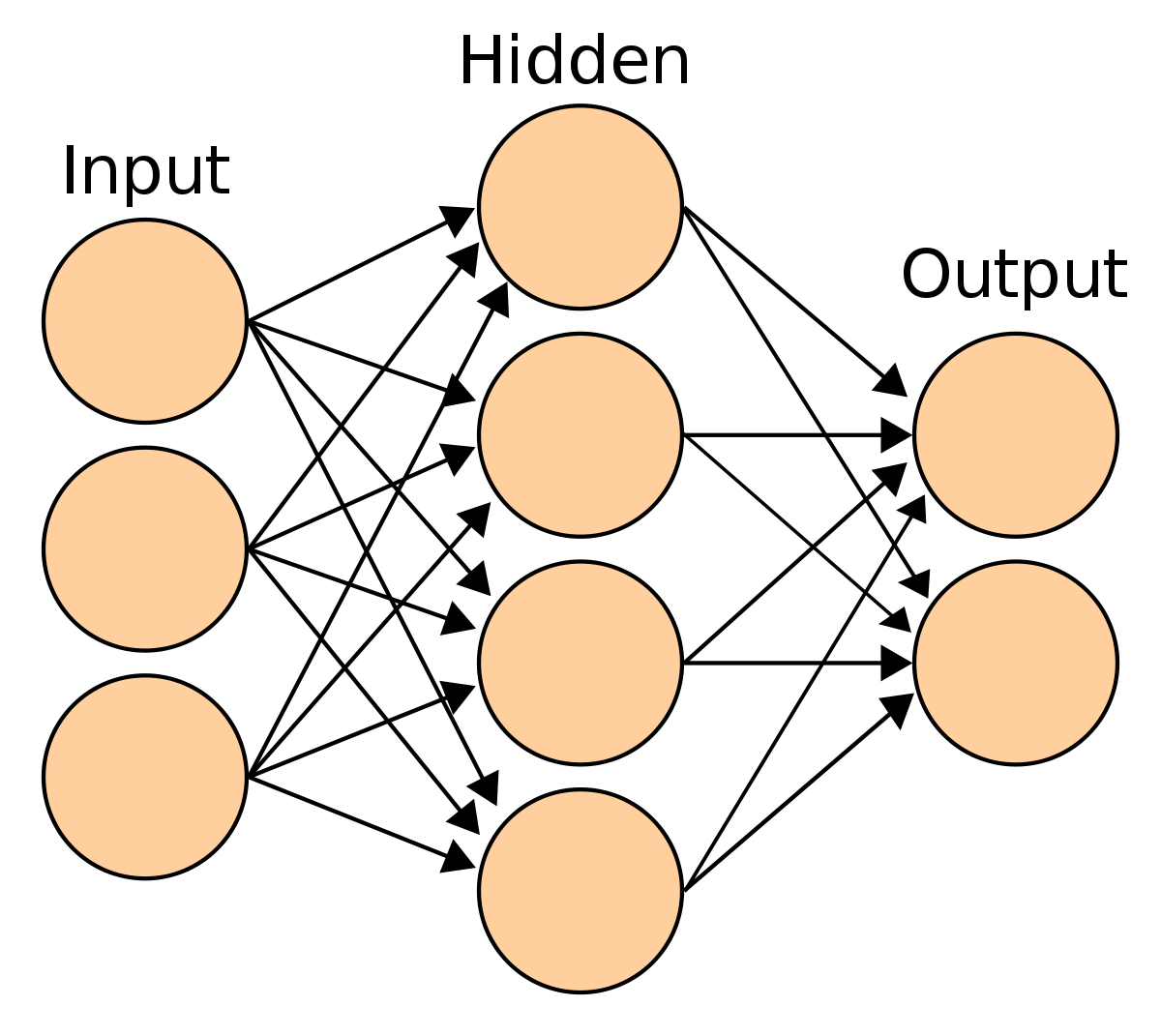

There are three important parts of a connectionist network: neurons, weights, and activation functions. Neurons can be seen as nodes in a directed graph, with the connections between them represented as edges. Each edge carries a numerical weight that represents the strength of the connection. These weights are not fixed: they change as the network learns, strengthening or weakening connections according to the training data the network sees.
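
One way to picture this is to store the network as a directed graph in which every edge carries a weight. The sketch below uses hypothetical node names and weights; it computes the weighted input a neuron receives along its incoming edges, the value its activation function would then be applied to.

```python
from typing import Dict

# Hypothetical toy network: each target neuron maps to its incoming edges,
# written as {source neuron: connection weight}.
IncomingEdges = Dict[str, Dict[str, float]]

network: IncomingEdges = {
    "h1": {"x1": 0.5, "x2": -1.0},
    "h2": {"x1": 1.5, "x2": 0.25},
    "out": {"h1": 2.0, "h2": -0.5},
}


def net_input(node: str, activations: Dict[str, float], edges: IncomingEdges) -> float:
    """Weighted sum of the activations flowing into `node` along its incoming edges."""
    return sum(weight * activations[src] for src, weight in edges[node].items())


acts = {"x1": 1.0, "x2": 2.0}
print(net_input("h1", acts, network))  # 0.5*1.0 + (-1.0)*2.0 = -1.5
print(net_input("h2", acts, network))  # 1.5*1.0 + 0.25*2.0 = 2.0
```

A larger weight magnitude means the source neuron has a stronger influence on the target; a negative weight means an inhibitory connection.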

In a fully connected network, every neuron in one layer connects to every neuron in the next layer; otherwise, the network may contain sparsely connected regions in which some neurons are not connected to one another at all. Each node applies an activation (or transfer) function to the weighted sum of its inputs, producing the output value that it passes on to the nodes it connects to.
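
A minimal sketch of a single layer, assuming a logistic (sigmoid) activation function, which is one common choice among several. The optional `mask` argument is a hypothetical device for marking which connections exist: leaving it out gives a fully connected layer, while zeros in it model a sparsely connected one.

```python
import math
from typing import List, Optional

Matrix = List[List[float]]


def sigmoid(z: float) -> float:
    """Logistic activation, computed in a form that avoids overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def layer_output(inputs: List[float], weights: Matrix, biases: List[float],
                 mask: Optional[List[List[int]]] = None) -> List[float]:
    """Outputs of one layer; weights[j][i] is the weight from input i to neuron j.

    If `mask` is given, mask[j][i] == 0 means that connection does not exist.
    """
    outputs = []
    for j, row in enumerate(weights):
        total = biases[j]
        for i, (w, x) in enumerate(zip(row, inputs)):
            if mask is None or mask[j][i]:
                total += w * x  # only existing connections contribute
        outputs.append(sigmoid(total))  # activation applied to the weighted sum
    return outputs


W = [[1.0, 1.0, 1.0], [0.5, -0.5, 2.0]]
B = [0.0, 0.0]
X = [1.0, 2.0, 3.0]
dense = layer_output(X, W, B)                                # every input reaches both neurons
sparse = layer_output(X, W, B, mask=[[1, 0, 0], [0, 0, 1]])  # each neuron sees one input
print(dense, sparse)
```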

Properties of a connectionist network

A connectionist network possesses some general characteristics. Some of these are as follows:

- The cells in a neural network are interlinked, usually as a directed acyclic graph or another kind of directed graph, so that signals can propagate from cell to cell along the connections.

- The connections between cells form associations between inputs and outputs: learning modifies the strength of some connections (the network’s synapses) so that each input pattern comes to evoke a specific output pattern.

- Cells do not have separate processing capacities. Rather, information is distributed across the network, and its functions are determined by the interactions among many cells rather than by the workings of any single element.

- A network typically has an input layer, one or more hidden layers, and an output layer.

- Networks may contain loops.
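
The idea that a pattern of connection strengths, rather than any single cell, links an input pattern to an output pattern can be sketched with a simple linear associator trained by a Hebbian (outer-product) rule. This is a classic textbook model chosen here for illustration, not a description of any particular network; the patterns and helper names are hypothetical, and perfect recall relies on the stored input patterns being orthogonal and of unit length.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def hebbian_train(pairs: List[Tuple[Vector, Vector]], n_in: int, n_out: int) -> Matrix:
    """Store associations by adding the outer product y x^T of each (x, y) pair.

    W[j][i] grows when input unit i and output unit j are active together.
    """
    W = [[0.0] * n_in for _ in range(n_out)]
    for x, y in pairs:
        for j in range(n_out):
            for i in range(n_in):
                W[j][i] += y[j] * x[i]
    return W


def recall(W: Matrix, x: Vector) -> Vector:
    """Present an input pattern and read out the output pattern it evokes."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


X1 = [0.5, 0.5, 0.5, 0.5]
X2 = [0.5, -0.5, 0.5, -0.5]  # orthogonal to X1, same length
Y1 = [1.0, -1.0]
Y2 = [1.0, 1.0]

# Both memories are superimposed on the same weights: no cell stores either one alone.
W = hebbian_train([(X1, Y1), (X2, Y2)], n_in=4, n_out=2)
print(recall(W, X1), recall(W, X2))  # [1.0, -1.0] [1.0, 1.0]
```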

Limitations of Connectionism

In general, connectionist models have known limitations in a number of areas. They do not always produce reliable results. Further, there have been relatively few commercial applications of these models because of problems such as low tolerance for errors and high sensitivity to the initial training patterns. These models are also often difficult to interpret, so users need advanced knowledge and expertise to use them effectively.

Many connectionist systems cannot easily adapt to new situations: once trained, their weights are usually fixed, which makes it difficult for them to keep learning from experience or from input data that changes over time. Additionally, researchers have found that these networks tend to degrade in performance when new data does not fit the patterns they were trained on.

More Recent Approaches

According to Marvin Minsky, who built one of the earliest neural-network learning machines and later became a prominent critic of perceptrons, the theory of connectionism began with three observations: that it is easy to program computers to solve problems involving ‘local’ connections but hard to program them to solve problems based on ‘non-local’ connections; that we can do complex non-symbolic calculations in our heads; and that animal brains seem very different from digital computers.

In other words, human brains are very good at finding solutions based on experience and context rather than relying on a hard-coded direct solution. The question for AI researchers was how they could recreate these abilities in an artificial system.
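
A common textbook illustration of a problem that cannot be solved by looking at inputs one at a time is XOR (exclusive or), whose output depends on both inputs jointly. No single threshold unit can compute it, because no straight line separates the inputs that should give 1 from those that should give 0, yet two layers of threshold units can. Relating XOR to the ‘non-local’ problems mentioned above is an interpretive gloss, and the weights below are hand-picked for illustration rather than learned.

```python
from itertools import product
from typing import List


def unit(weights: List[float], bias: float, inputs: List[int]) -> int:
    """A single threshold unit: fire (1) if the weighted sum plus bias is positive."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) + bias > 0 else 0


def xor_net(a: int, b: int) -> int:
    """Two-layer network: hidden units compute OR and AND; output is OR and not AND."""
    h_or = unit([1.0, 1.0], -0.5, [a, b])   # on if at least one input is on
    h_and = unit([1.0, 1.0], -1.5, [a, b])  # on only if both inputs are on
    return unit([1.0, -1.0], -0.5, [h_or, h_and])


print([xor_net(a, b) for a, b in product([0, 1], repeat=2)])  # [0, 1, 1, 0]

# Search a small grid of weights for a single unit that computes XOR. This only
# illustrates the point (it is not a proof); no real-valued weights would work.
grid = [v / 2 for v in range(-4, 5)]  # -2.0, -1.5, ..., 2.0
single_unit_works = any(
    all(unit([w1, w2], b, [a, c]) == (a ^ c) for a, c in product([0, 1], repeat=2))
    for w1 in grid for w2 in grid for b in grid
)
print(single_unit_works)  # False
```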

Challenges of Neural Networks

Neurons are extremely simple computational units, and individually they can do very little. A single neuron performs only a few basic operations: it receives inputs, computes their weighted sum, applies its activation function, and passes on the result. When a neural network contains hundreds or thousands of neurons, which isn’t unusual in larger networks, the number of connections grows quickly and becomes difficult to manage effectively.
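
Much of that difficulty comes from how fast the number of connections grows with layer size. A minimal sketch, assuming fully connected feedforward layers with one bias per neuron; the layer sizes are hypothetical (784 inputs would correspond, for example, to a 28×28 pixel image).

```python
from typing import List


def count_parameters(layer_sizes: List[int]) -> int:
    """Weights plus biases in a fully connected feedforward network.

    Each pair of adjacent layers with n_in and n_out neurons contributes
    n_in * n_out connection weights and n_out biases.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out + n_out
    return total


print(count_parameters([784, 100, 10]))   # 784*100 + 100 + 100*10 + 10 = 79510
print(count_parameters([784, 1000, 10]))  # 784*1000 + 1000 + 1000*10 + 10 = 795010
```

Making the hidden layer ten times wider multiplies the parameter count by roughly ten, since almost all parameters sit in the connections between large layers.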

Instead of working neuron by neuron, connectionists treat entire layers as the basic unit of computation. Computing a whole layer at once, as a single matrix operation, generally runs much faster than computing each neuron separately, and it gives exactly the same answer as calculating each neuron’s weighted sum individually.
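
The claim that whole-layer processing gives the same answer as neuron-by-neuron computation can be checked directly, because a layer’s weighted sums are just a matrix-vector product plus a bias vector. The sketch below computes them both ways in plain Python with hypothetical weights; in practice the speed-up comes from handing the matrix form to optimized linear-algebra code (for example NumPy or a GPU library), which this sketch does not attempt to show.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def neuron_by_neuron(W: Matrix, x: Vector, b: Vector) -> Vector:
    """Compute each neuron's weighted sum separately, one multiply-add at a time."""
    out = []
    for j in range(len(W)):
        total = b[j]
        for i in range(len(x)):
            total += W[j][i] * x[i]
        out.append(total)
    return out


def whole_layer(W: Matrix, x: Vector, b: Vector) -> Vector:
    """The same computation expressed as one matrix-vector product: W x + b."""
    Wx = [sum(w * xi for w, xi in zip(row, x)) for row in W]
    return [wx + bj for wx, bj in zip(Wx, b)]


W = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # 3 neurons, 2 inputs each
x = [1.0, -1.0]
b = [0.5, 0.0, -0.5]
print(neuron_by_neuron(W, x, b))  # [-0.5, -1.0, -1.5]
print(whole_layer(W, x, b))       # [-0.5, -1.0, -1.5]
```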

Benefits of Using Artificial Neural Networks

Neural networks are helpful because, in principle, they can approximate almost any function to whatever accuracy is needed, given enough neurons and training data. This means that many tasks you can describe mathematically, including vision, speech, and pattern recognition, can be tackled by a neural network. So what are some of the benefits? Let’s take a look at three major benefits of using artificial neural networks:

- Easy to use: It’s relatively easy to set up a neural network. There are many different types of neural networks, but most share similar structures and learning algorithms. Plus, small networks don’t require much in terms of resources: they can be trained on an ordinary computer.

- Scalable: If your data set becomes too big for one machine to handle, the work can be spread across multiple machines, giving your network more computing power than a single machine could provide.

- Flexible: The learning algorithms used in these models allow them to adapt quickly as new information comes in.
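
The broad claim that neural networks can tackle almost any function you can describe mathematically is usually grounded in results such as the universal approximation theorem: a network with a single hidden layer of sigmoid units can approximate any continuous function on a bounded interval as closely as desired, given enough hidden units. The sketch below illustrates the intuition by hand-setting the weights instead of training them: each steep sigmoid acts as a smoothed step, and adding up the steps traces out the target function. The target (sin on [0, π]), the steepness constant, and all function names are illustrative assumptions.

```python
import math
from typing import Callable


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def build_step_network(f: Callable[[float], float], a: float, b: float,
                       n_hidden: int, steepness: float = 20.0) -> Callable[[float], float]:
    """Hand-set one-hidden-layer network approximating f on [a, b].

    Hidden unit i is a steep sigmoid centred between grid points x[i-1] and x[i];
    its output weight is the jump f(x[i]) - f(x[i-1]), and the output bias is f(a).
    """
    h = (b - a) / n_hidden
    grid = [a + i * h for i in range(n_hidden + 1)]
    k = steepness / h  # input weight of every hidden unit: larger k means sharper steps
    centres = [(grid[i - 1] + grid[i]) / 2 for i in range(1, n_hidden + 1)]
    out_weights = [f(grid[i]) - f(grid[i - 1]) for i in range(1, n_hidden + 1)]
    out_bias = f(a)

    def network(x: float) -> float:
        hidden = [sigmoid(k * (x - c)) for c in centres]  # hidden-layer activations
        return out_bias + sum(w * hv for w, hv in zip(out_weights, hidden))

    return network


def max_error(net: Callable[[float], float], f: Callable[[float], float],
              a: float, b: float, samples: int = 1000) -> float:
    """Largest absolute gap between net and f on an evenly spaced test grid."""
    xs = [a + i * (b - a) / samples for i in range(samples + 1)]
    return max(abs(net(x) - f(x)) for x in xs)


for n in (10, 50, 200):
    net = build_step_network(math.sin, 0.0, math.pi, n_hidden=n)
    print(n, round(max_error(net, math.sin, 0.0, math.pi), 4))
```

Increasing `n_hidden` shrinks the steps and, with them, the maximum error. A trained network finds its own weights by gradient descent rather than by this explicit construction.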

Final verdict

In psychology, connectionism is a research tradition that attempts to explain mental phenomena using artificial neural networks. This approach can be seen as an alternative to other research traditions, such as behaviorism and cognitivism. The best-known examples of connectionist research come from cognitive psychology and linguistics, such as studies of speech recognition and language processing. More recently, connectionist models have also been applied in neuroscience and psycholinguistics research.

Connectionists are now increasingly applying their theories to real-world problems in computer science, engineering, business analytics, and other fields, since the computing power needed to train large networks has become much cheaper than before.